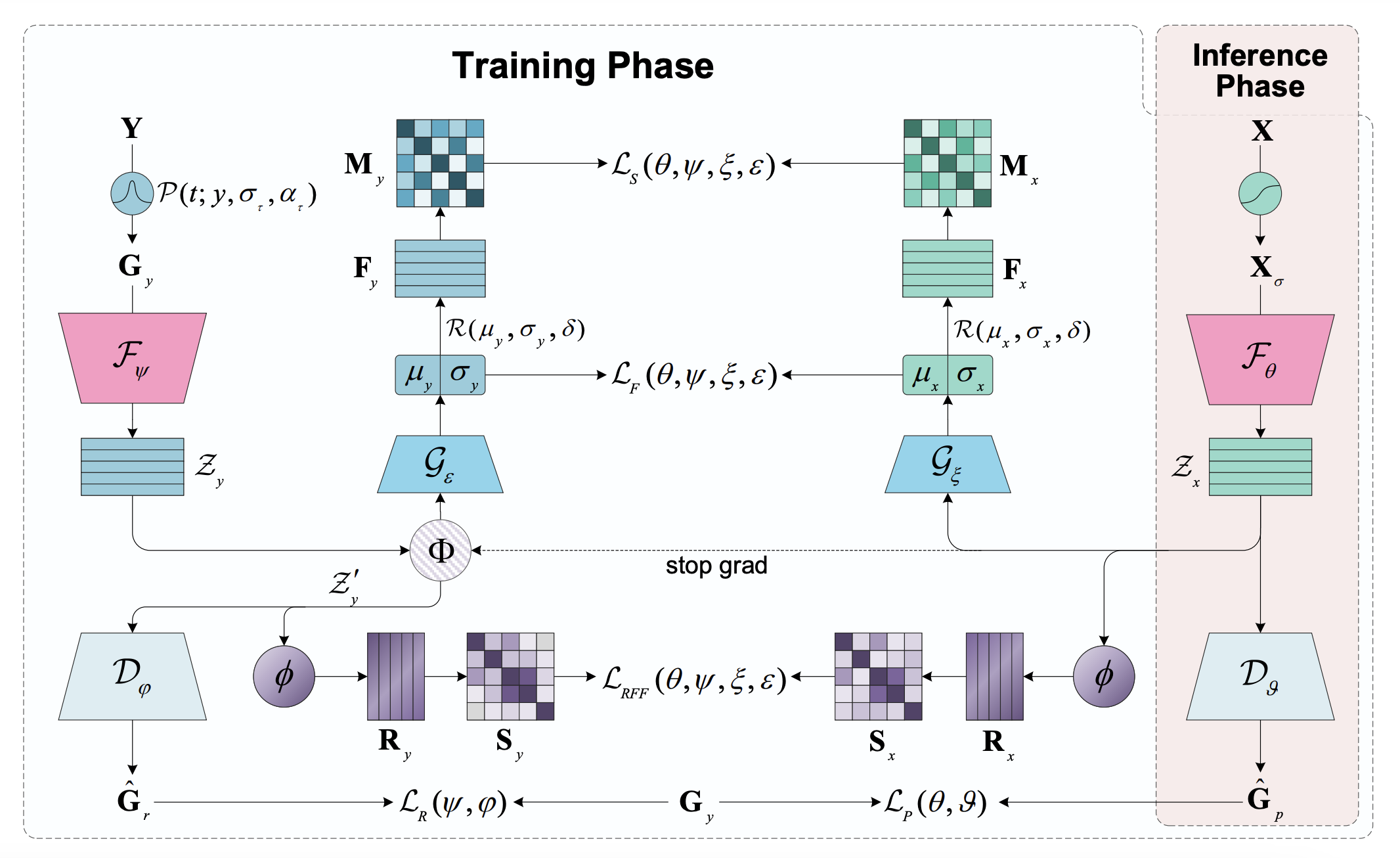

Label Noise-Robust Learning (LNRL) approach was designed for handling label noise in microseismic tasks with small-scale datasets. LNRL aligns feature representation and label representation distribution in multiple feature spaces, learns the correlation between instances and label noise, and mitigates the impact of label noise.

The code of this project is developed based on SeisT.

-

For training and evaluation

Create a new file named

mydata.pyin the directorydataset/to read the metadata and seismograms of the dataset. And the@register_datasetdecorator needs to be used to register the custom dataset.(Please refer to the code example

datasets/sos.py)

-

Model

Before starting training, please make sure that the model code is in the directorymodels/and register it using the@register_modeldecorator. All available models in the project can be inspected by using the following method:>>> from models import get_model_list >>> get_model_list() ['lnrl','seist']

-

Model Configuration

The configurations of the loss functions, labels, and the corresponding models are inconfig.pywhich also provides a detailed explanation of all the fields. -

Start training

To start training with a CPU or a single GPU, please use the following command to start training:python main.py \ --seed 0 \ --mode "train_test" \ --model-name "lnrl" \ --log-base "./logs" \ --device "cuda:0" \ --data "/root/data/Datasets/SOS" \ --dataset-name "sos" \ --sigma 600 \ --data-split true \ --train-size 0.8 \ --val-size 0.1 \ --shuffle true \ --workers 8 \ --in-samples 6000 \ --augmentation true \ --epochs 200 \ --patience 30 \ --batch-size 300

Use

torchrunif training with multiple GPUs.There are also a variety of other custom arguments which are not mentioned above. Use the command

python main.py --helpto see more details.

Use the following command to start testing:

python main.py \

--seed 0 \

--mode "test" \

--model-name "lnrl" \

--log-base "./logs" \

--device "cuda:0" \

--data "/root/data/Datasets/SOS" \

--dataset-name "sos" \

--data-split true \

--train-size 0.8 \

--val-size 0.1 \

--workers 8 \

--in-samples 6000 \

--batch-size 300It should be noted that the train_size, val_size, and seed in the test phase must be consistent with that training phase. Otherwise, the test results may be distorted.

This project refers to some excellent open source projects: PhaseNet, EQTransformer

Copyright S.Li et al. 2024. Licensed under an MIT license.